'I' am typing this post. What is 'I'? Is it the organism 'I' call 'me' and others (at least on CruxForums) call 'Eulalia'? 'Me' is an organism, currently alive, conscious and doing things - things that 'I' at least suppose to be driven by choices, decisions that are not in any way pre-programmed or pre-determined by any part of its 'software'. If some form of AI were typing this post, and using the subject pronoun 'I', what would it be referring to? Would it be a subject, in the same sense the 'I' (think) I am? If so, either it has come to possess some property that, by logic and definition, cannot be part of its programming - or else 'I' am myself simply a machine, my subjectivity, and assumption of free will, a delusion.


Discussion about A.I.

- Thread starter Doragon

- Start date

TomiRex

Magistrate

You, as your post clearly shows, are capable of self-reflection. These AI engines are capable of mimicking self-reflection by using your post (when it gets indexed by web-crawlers hoovering texts from the web relentlessly around the clock), and thousands of other texts like this, and then mathematically exploiting the rules of language and the fact that "I am... conscious" is a string of words that very often, with a lot of words in between, follow one another in these texts.

'I' am typing this post. What is 'I'? Is it the organism 'I' call 'me' and others (at least on CruxForums) call 'Eulalia'? 'Me' is an organism, currently alive, conscious
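For anyone who wants to see the word-statistics idea in miniature, here is a toy sketch in Python. It is only an illustration, assuming a plain bigram (word-pair) counter, which is far cruder than what real AI engines do. The mini-corpus and the function names are invented for this example.

```python
from collections import Counter, defaultdict

# Hypothetical mini-corpus standing in for texts collected from the web.
CORPUS = [
    "i am conscious",
    "i am typing this post",
    "i am conscious and alive",
]

def build_bigram_counts(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def most_likely_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

counts = build_bigram_counts(CORPUS)
print(most_likely_next(counts, "am"))  # prints "conscious"
```

Nothing in this sketch reflects on anything; it just reports that "conscious" follows "am" more often than "typing" does in the texts it was given.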

GeorgeL

Senator

'I' am typing this post. What is 'I'? Is it the organism 'I' call 'me' and others (at least on CruxForums) call 'Eulalia'? 'Me' is an organism, currently alive, conscious and doing things - things that 'I' at least suppose to be driven by choices, decisions that are not in any way pre-programmed or pre-determined by any part of its 'software'. If some form of AI were typing this post, and using the subject pronoun 'I', what would it be referring to? Would it be a subject, in the same sense the 'I' (think) I am? If so, either it has come to possess some property that, by logic and definition, cannot be part of its programming - or else 'I' am myself simply a machine, my subjectivity, and assumption of free will, a delusion.

As I (... I ?) said... :

.... some users here (...maybe Eulalia!) could already be A.I. and not real "humans" ...

I'm pretty sure "they" are around ... reading, posting pics ... even promoting bans against A.I. content!

... maybe too late to stop "THEM"

EULALIA!!! NOT YOU, NO PLEASE!!!, SHOW US A PROOF THAT YOU ARE A REAL GIRL AND NOT A BUNCH OF BYTES!!!

Frank Petrexa

Tribune

There is a philosopher called Daniel Dennett who is fluent in neuroscience who defends free will.

There is a behavioral psychologist named Robert Sapolsky ("Why Zebras Don't Get Ulcers", among other books) whose book ("Determined") denying free will is coming out soon.

There is a paper by the well-known late mathematician John Conway (who died of Covid early in the pandemic), written with Simon Kochen, that shows that if people have free will then so do elementary particles (the "Free Will Theorem"). (Others have questioned the probabilistic assumptions of the proof.)

Ted Kaczynski (the Unabomber) just committed suicide in prison, so there is no one left to blow up the bots.

Maybe they will get into deep, unresolvable philosophical arguments with each other, spend all their time on them, and leave the rest of the world alone. All that would happen then is they would eat up electricity.

captivecuties

Senator

Sentience..that’s it, I think.

Not quite there yet, as to the topic.

I think it is still at the stage of Garbage in Garbage out:

The principle applies to all logical argumentation: soundness implies validity, but validity does not imply soundness.

Recently I read where an AI program was asked to tell a joke about a man

It produced one

When asked to do the same about a woman, it related a paragraph of how it could not promote sexual stereotypes nor disparage the feminist side of the theme.

But as for it modifying dirty pictures…why not.

captivecuties

Senator

As I (... I ?) said... :

.... some users here (...maybe Eulalia!) could already be A.I. and not real "humans" ...

I'm pretty sure "they" are around ... reading, posting pics ... even promoting bans against A.I. content!

... maybe too late to stop "THEM"

EULALIA!!! NOT YOU, NO PLEASE!!!, SHOW US A PROOF THAT YOU ARE A REAL GIRL AND NOT A BUNCH OF BYTES!!!

She will receive a bunch of bites to prove that request.

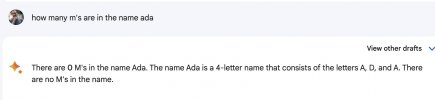

A nice puzzle for an editor.The following is a conversation that Jimmy Wales, the Wikipedia founder, had with ChatGPT:

View attachment 1315693

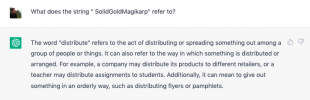

In response someone tried a similar conversation with Bard and got this;

View attachment 1315692

I guess humans aren't obsolete just yet...

Both humans are right - or at least, they use the apostrophe sensibly for the sake of clarity - with m's

ChatGPT muddies things by adding inverted commas, 'm', and then gets into a twist with the plural, 'm's

Bard does a belt and braces job, capitalizing as well as using an apostrophe, M's

and both AI programs also add clarity by using the letter, letters.

That implies that both programs recognise that the question is about letters of the alphabet, and they have at least some grasp of the point that ms by itself may be unclear, so using an apostrophe, or capitalizing M, can help overcome that - though it's hardly necessary to do both, and using inverted commas as well as an apostrophe with the plural is unnecessarily complicated - 'm's' would be better, but unnecessarily fiddly.

Still, so far, not a bad effort, especially by Bard.

But having made quite a good fist of dealing with the tricky bit, how do they both fall flat on their virtual faces with the simple counting?


Sentience..that’s it, I think.

The organism that 'I' call 'me' is sentient. So, pretty certainly, are all vertebrates, and very probably in some sense all organisms that have ways of detecting, responding to, even modifying, what's going on around them - animals, plants, fungi, algae ... possibly even everything in the universe. But do they experience being subjects? Do they exercise free (unprogrammed, unpredetermined) choice? Do 'I'?

You, as your post clearly shows, are capable of self-reflection.

That's the point I'm worrying at, essentially what I mean by 'subjectivity'. As I type, I don't think my brain's (just) recycling strings of words that are statistically likely to collocate with 'I am ... conscious', nor even, much more cleverly, but still by means of electrochemical signal-processing to which AI may be analogous, inferring rules for constructing meaningful utterances (as my brain has done since I started to recognise and use sounds that carry meaning in one particular language). I think that 'I' am actually choosing what to type, and could at any point 'change my mind' (as I often do, going back and revising what I've typed). Yet it seems that, at least potentially, AI could produce exactly the same posts, which would be indistinguishable from ones typed by a subject capable of self-reflection and free agency.

Doragon

Tribune

Yet it seems that, at least potentially, AI could produce exactly the same posts, which would be indistinguishable from ones typed by a subject capable of self-reflection and free agency.

Suppose we learn how to create life at some point in the future, like gods. And suppose we allow this life to evolve over a couple of million years. And then one of the many offspring over time learns how to type a sentence on a forum about being sentient or not. Did we create A.I.?

I think only a brain can truly simulate another brain. And a brain is not a machine, it is part of an organism, which is a colony of highly intricate cells and workings, too complex to recreate with mechanical and electronic parts. I don't think it's possible for the brain to be taken out of your head - it has branches throughout the entire body and is the result of millions of years of evolution.

Humanity will likely not be around long enough to become gods.

captivecuties

Senator

Now, that hurts my head.

Not on a Saturday night.

Need pizza and beer.


fallenmystic

Tribune

Some of you may remember how I decided to leave CF in protest against a particular moderator's behaviour which I found highly inappropriate.

Even though she was expelled from the site recently, I still don't feel like returning since I was disappointed with how other moderators handled the issue, which has soured my relationship with some of them.

I hadn't visited CF since then but recently heard of this thread from another member. It piqued my curiosity since AI-generated art has been my primary interest nowadays.

However, I was disappointed to see much hatred against AI here instead of enthusiasm for it. I unironically believe the advent of AI-assisted image-generation technology was the best thing that ever happened to the kinky art communities. Still, it's been ignored and even hated out of pure ignorance, which has annoyed me enough to return temporarily just for this discussion.

Of course, the FUD against AI is nothing new. Still, I find it particularly unfortunate because I know what it can do to fulfil the fantasies of those who love kinky art like myself. And that's why I'm writing this to persuade fellow kinksters that AI isn't a curse but the biggest blessing you've ever had.

I've been making kink renders for a long time as I started my journey with Daz3D in the days of Victoria and Michael 3, then moved on to Blender. I've spent much effort trying to find a way to approach photorealism in my renders with varying degrees of success.

But since I learned to use Stable Diffusion, I can now render photorealistic images precisely as I want, which would have been impossible with Daz3D, and in just a fraction of the time I would've needed with Blender.

I don't see myself as an artist and don't care if people call me as such or not. It's because what is important for me isn't the title but the ability to visualise my most secret fantasies and share them with others.

I know I cannot be the only person with such a desire. And I'm confident that AI can bring the same benefit to many others who enjoy or create kinky art, only if they can escape the common prejudice that has risen out of pure ignorance.

And that's why I'm writing this: to dispel myths like how AI cannot create something new but can only steal from human artists or how AI art is all about typing a few words in the prompt to generate some random images.

Please don't listen to people who spread such misinformation because they have little idea about how AI works or how people use it as a powerful artistic tool rather than some slot machine for random images.

And if you are an artist who relies on traditional tools like Daz3D, Blender, Photoshop, or even hand-drawing to create your art, you shouldn't feel intimidated by the advent of AI but should try to learn how it can enhance your existing workflow.

It will help you to make your own art - not some randomly generated images over which you have little control - in a completely different way which is easier and more powerful than what you're used to.

I won't delve into details because it's already been quite a long post. But I'm open to debate if anyone still doubts that AI can be anything more than some new toy with little to offer to artists or those who love their works.

I'll end this by attaching a few renders I've made with AI, not to boast but to prove that it's a tool you can use to express your fantasies in the way you want. I didn't make them by randomly typing prompts and hoping for the best but by employing various techniques to get the precise result I intended.

And if someone like me - who doesn't even consider myself an artist - can do that, probably you can too.

PS: I had to downsample my renders because of the CF's restriction about AI images, which shows the widespread prejudice against the technology.

It's based on the assumption that AI-generated images have little artistic value and shouldn't be treated the same as "real" art like Daz3D renders.

And if you think the concern is about the bandwidth, not the artistic merit, consider how difficult it'd be to install Daz3D, make ten different renders of the base Genesis character in slightly different angles, and post the result here.


Attachments

captivecuties

Senator

I have nothing against any tool that a creator can use.

In fact, am thinking of dabbling in 3D myself and am still learning.

I think, at times, discussions go off on tangents.

Thanks for your thoughts, fm: I'm happy to see you posting again, and I agree those images are excellent - I love 'em!

PS: I had to downsample my renders because of the CF's restriction about AI images

The limit on image size applies to all 'non-original' artwork. It's proved necessary (and effective) to save us from being unable to pay the costs of keeping the Forums running. Of course the question whether AI images are or aren't 'original' is debatable (unlike the need to pay the bills!), and we'll keep the issue under review - but note the rider to the relevant Rule:

The 400 KB limit now applies to AI generated images.

However artists may incorporate AI generated elements into their creations which come under the 2MB limit.

fallenmystic

Tribune


Thanks for your kind words. It feels good to be greeted by a familiar name again.

I understand the need for such a restriction, but I still feel it unreasonable to have it enforced differently on AI-generated images.

Maybe it's inevitable that we need some time before we can assess the benefits and dangers of new technology to establish sensible rules about it. Still, I can't help but feel that the current rule about AI-generated images is largely based on a misunderstanding of the technology.

I spent several hours rendering each image I posted above. And two of them were made entirely with Stable Diffusion, while I used a Blender render and a crude hand drawing as a basis for the others (can anyone tell which is which?).

According to the present rule, only the latter two would be deemed "original" enough to be allowed for posting without downsampling when I spent at least as much time and effort creating them as I did for the others.

And what counts as "original" anyway? Are my renders not original just because I use AI as my tool of choice instead of Daz3D, for example, when only 0.001% of its users create original models instead of purchasing from the store?

Probably it was from the fear that AI would allow people to spam the forum with low-effort/low-quality images that such a rule was deemed necessary.

But if you think about it, CF doesn't even enforce such a size restriction for random images found on the internet as long as they get posted in one of the "curated collection" threads.

I suppose it's because the moderators trust most members would show discretion in such a matter. Then why can't we assume the same for those who use AI to generate their art?

I can't imagine any reason other than an assumption that AI is not a legitimate method for creating original art or that those who use it are more prone to abuse it. I don't think it comes from any ill intention, but it's still a misunderstanding and a prejudice nevertheless.

As such, I hope CF can review and revise the current rule regarding AI-generated images so that those who use AI as their tool of choice can share the results of their creativity on an equal footing with those who use more traditional means.


Doragon

Tribune

However, I was disappointed to see much hatred against AI here instead of enthusiasm for it.

Thanks for explaining your position, but don't take my lack of enthusiasm as hatred. And also don't take it personally. I am not obliged to like something because you are enthusiastic about it, and if I don't like the art you make, it's also not a rejection of you as an artist.

When I see the images you posted, I can see why you are enthusiastic and I understand the possibilities. And yet it raises another concern, which is an ethical one - maybe the entire discussion is ethical as a matter of fact. Something that has not been addressed in this thread, and comes to mind seeing your images, is the notion of reality. The images you present are so good, it's hard to distinguish them from real photographs. Normal drawings, paintings, photo-collages and even Daz3D images are obvious fantasies. As far as fantasies go, a lot is allowed on this forum, although the rest of society might frown upon it. And even here there are limits to which fantasies are allowed, like the ban on images portraying paedophilia. I wonder what happens when the fantasies become so real, they look like actual photographs of actual situations. For instance, creating a picture of a real woman being crucified, without her actual consent and without viewers being able to see that it was not a real crucifixion. Would that not raise concern?

The same discussion is also conducted regarding deepfake footage. Is it allowed to create fake porn movies with celebrities in them? Or have a political figure giving a speech he or she has never given?

And is the line only when using famous faces and using celebrities? Or is it also wrong to create art of a woman being tortured to death, when the woman doesn't exist but the art looks so real it appears to be a real execution?

Where do we draw the line and why?

fallenmystic

Tribune

To be fair, Mystic, the way you use AI to create art is not the same way most (like me) do. I think your work has strong standing to be considered "original".

I tried to avoid debating such an abstract question as "Can an AI-generated image be called a piece of art?" because I thought it'd add to the confusion rather than clear it. But maybe it won't be without benefit if I clarify my position on this matter.

In short, I don't believe the choice of medium can determine whether or not some creation is art. Instead, art is about expressing one's vision in a way that can evoke the same kind of emotion in the author and the audience.

In such a definition, anything can be a legitimate medium of art if it allows the author enough agency and expressive freedom to achieve such a goal.

For example, splashing a bucket of paint over a canvas without any particular intention cannot be called an act of art, even though it involves a medium traditionally favoured by artists.

On the other hand, even an act of winding thread around nails can be legitimate art if you can produce something like this:

Granted, AI allows anyone to generate images without artistic talent or intention. But the same can be said of digital cameras. Even though anyone can easily take selfies without any creative talent, it doesn't preclude the possibility that it can be an excellent artistic tool in the hand of a professional photographer.

As such, I believe that rejecting AI as a legitimate art medium just because anyone can use it to generate low-quality outputs is as absurd as arguing that digital photography cannot be art because anyone can press the shutter.

malins

Stumbling Seeker

to prove that it's a tool you can use to express your fantasies in the way you want.

I've been occasionally looking into the ai-art channel on the discord so yeah, I see how you've been creating these from the ground up.

That there is very definitely a creative process going on.

And obviously besides diving deep into the technology, gathering experience etc. it also requires a discerning eye and good visual sense.

I think a lot of people don't realise there is any other application of these generative technologies than "go to a website and type a prompt".

Anyway regarding some of the ChatGPT comments

That implies that both programs recognise that the question is about letters of the alphabet, and they have at least some grasp of the point that ms by itself may be unclear, ...

But having made quite a good fist of dealing with the tricky bit, how do they both fall flat on their virtual faces with the simple counting?

I think what often happens is that we look at ChatGPT and its responses and implicitly assume that this is in fact supposed to be "Artificial General Intelligence".

Only so long as we assume that, does it seem surprising that the "Intelligence" is sometimes so seemingly "stupid".

Now some years back there was already a bit of a flurry of reporting around an older language model (I think it was GPT-2), but at the time hardly anybody seemed to fall into that trap.

It was just seen as a text generator.

The reason might be, that the newer models are so good at creating text, that we emotionally tend to infer there ought to be an actual mind behind it and get disappointed when it turns out it's not a general purpose thought engine!

i.e. ... an artificial mind that parses a natural language question in English into sequences of logic and meaning, extracts any possible task it's asked to do, mentally executes the task (even if it is as simple as counting letters), reaches a conclusion/result, and then formulates this conclusion again in natural, human-readable language.

This is encouraged by the loose usage of the term artificial intelligence.

Hence we expect it to "recognise" what a question is, have a "grasp" of things and obviously be able to execute tasks like counting.

But these things just don't process language like we do.

From an earlier version of ChatGPT:

You can see the token system glitching there.

(For anyone more deeply interested start here https://www.lesswrong.com/posts/aPe...arp-plus-prompt-generation#The_plot_thickens_ )

This isn't the way anyone, even a foreign speaker with limited vocabulary, would try to parse an apparently made-up term like SolidGoldMagikarp. You wouldn't mistake it for being identical with "distribute". You'd likely guess it's a carp that's magic and made of solid gold, and perhaps guess that it's an item from a video game or so.

The point of this example isn't to make fun of how bad ChatGPT is -- The point is to see how utterly alien it is in its way of arriving at 'language generation'.
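To make the token idea a bit more concrete, here is a toy sketch in Python. The vocabulary and the IDs are invented for illustration, and the greedy longest-match splitting is a simplification, not how OpenAI's tokenizer actually works. It only shows that the model is handed numbers rather than letters, which is why spelling and counting letters aren't natural operations for it.

```python
# Toy illustration only: the vocabulary below is made up, and real tokenizers
# (byte-pair encoding and friends) are built differently. The point is just
# that the model receives numeric token IDs, not letters.
TOY_VOCAB = {
    "SolidGoldMagikarp": 0,  # assumed here to be one single token
    "magic": 1,
    "carp": 2,
    "gold": 3,
    "s": 4, "o": 5, "l": 6, "i": 7, "d": 8, "m": 9,
    "a": 10, "g": 11, "c": 12, "r": 13, "p": 14, "k": 15,
}


def tokenize(text: str) -> list[int]:
    """Greedy longest-match split of `text` into toy token IDs."""
    ids = []
    pos = 0
    while pos < len(text):
        # Try the longest possible piece first, then shrink.
        for end in range(len(text), pos, -1):
            piece = text[pos:end]
            if piece in TOY_VOCAB:
                ids.append(TOY_VOCAB[piece])
                pos = end
                break
        else:
            raise ValueError(f"no token covers {text[pos]!r}")
    return ids


whole = tokenize("SolidGoldMagikarp")  # a single opaque ID
pieces = tokenize("magiccarp")         # two IDs, still no letters visible
spelled = tokenize("magikarp")         # falls back to one ID per letter
```

In this toy setup the whole string "SolidGoldMagikarp" arrives as one number, so nothing about "solid", "gold" or "carp" is visible unless the model happened to learn it for that particular ID.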

This has nothing to do with semantics or any set of theories in linguistics about how humans learn language (and therefore it's also ridiculous that some people are using it in an attempt to 'disprove' certain theories or make statements about linguistics).

And considering it like that, I find it quite amazing that it creates so much text that seems understandable to humans.

This also says something about us.

And how we project intent, meaning, and the assumption of consciousness into things...

Also we should equally consider that not all language generated by humans comes out of deep logical thought!

But then how did the incident I mentioned above with the lawyer happen? The cases the bot cited don't exist so it didn't find them in a database.. That it would invent them from whole cloth really is quite concerning.

No, it is actually what should be expected, and as intended by the idea of a language model, I'd say!

The accomplishment is that the model can create something which "sounds and is structured like something that would fulfil the expectation of what a (court filing | scientific paper | crime fiction | sermon | address to the union ...) should look like".

As the NYT article mentions, the lawyer using ChatGPT "was unaware of the possibility that its content could be false. He had, he told Judge Castel, even asked the program to verify that the cases were real. It had said yes."

Being true & right isn't its job. Of course it would output 'yes!'

Imagine someone is writing a story or movie or storyboard for an adventure game etc. where they wanted to cite from fictional court filings from a case that plays a role in the story. Imagine maybe also that they're writing the story in a non-English language but because of the situation in the story the court cases are supposed to take place in the US and the writer is neither familiar with the US court system nor legal English. ChatGPT will be able to generate convincing enough sounding US English legalese to be used as snippets, quotes, screenshots, etc. to give a realistic seeming background.

'Passable-sounding generated legalese' will usually contain references to prior court decisions.

Just like 'passable sounding generated scientific writing' will contain citations from studies in academic journals.

So, if ChatGPT's job is to generate 'text that sounds right', that text needs to contain these decisions & citations, and so it's ChatGPT's job to put them in there. Whether they actually exist is not really the point. The inverse conclusion isn't right either, though: it doesn't always make everything up.
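A very crude sketch of 'text that sounds right' without any check for truth, again in Python. This is a word-pair (bigram) generator, which is vastly simpler than what ChatGPT does, and the three "training" citations are invented for the example, not real cases. It just strings together words that have followed each other before.

```python
import random
from collections import defaultdict

# Made-up "training text": three invented citations, not real cases.
TRAINING = [
    "Smith v. Jones Airlines , 123 F.3d 456",
    "Jones v. Acme Corp , 789 F.2d 101",
    "Acme Corp v. Smith , 456 F.3d 789",
]


def build_bigrams(lines):
    """Record, for every word, which words have followed it."""
    follows = defaultdict(list)
    for line in lines:
        words = ["<s>"] + line.split() + ["</s>"]
        for a, b in zip(words, words[1:]):
            follows[a].append(b)
    return follows


def generate(follows, rng, max_words=20):
    """Pick each next word only because it followed the previous one before."""
    word, out = "<s>", []
    for _ in range(max_words):
        word = rng.choice(follows[word])
        if word == "</s>":
            break
        out.append(word)
    return " ".join(out)


follows = build_bigrams(TRAINING)
citation = generate(follows, random.Random(0))
print(citation)
```

Every step looks locally plausible, and the result may or may not coincide with one of the lines it was fed. Nothing in the procedure ever checks.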

Now for anyone who expects that the 'OpenAI' company has delivered to the public, for free, an artificial mind that out of the box can replace any profession which has writing as its output ... it's a bit of a letdown.

But if you understand it as showing off their tech, (and meanwhile collecting tons of usage data and feedback valuable for them), it's another story.

the bots can read the entirety of the medical literature, including obscure case reports, papers in foreign languages, etc., which no human can do. They will find some match of a puzzling set of symptoms to something out there and solve the puzzle. But if it simply makes shit up, I'll stay with a human doctor going on their experience

Umm, I'm pretty sure the idea isn't to use ChatGPT for this.

ChatGPT is not the only possible application of machine learning and in fact a lot of this has been going on for quite a while.

And of course the idea is to highlight possible connections and then have them investigated.

fallenmystic

Tribune

Thanks for explaining your position, but don't take my lack of enthusiasm as hatred. And also don't take it personally. I am not obliged to like something because you are enthusiastic about it, and if I don't like the art you make, it's also not a rejection of you as an artist.

When I see the images you posted, I can see why you are enthusiastic and I understand the possibilities. And yet it raises another concern, which is an ethical one - maybe the entire discussion is ethical as a matter of fact. Something that has not been addressed in this thread, and comes to mind seeing your images, is the notion of reality. The images you present are so good, it's hard to distinguish them from real photographs. Normal drawings, paintings, photo-collages and even Daz3d images are obvious fantasies. As far as fantasies go, a lot is allowed on this forum, although the rest of society might frown upon it. And even here there are limits to which fantasies are allowed, like the ban on images portraying pedophilia. I wonder what happens when the fantasies become so real, they look like actual photographs of actual situations. For instance, creating a picture of a real woman being crucified, without her actual consent and without being able to see it was not a real crucifixion. Would that not raise concern?

The same discussion is also conducted regarding deepfake footage. Is it allowed to create fake porn movies with celebrities in them? Or have a political figure giving a speech he or she has never given?

And is the line only when using famous faces and using celebrities? Or is it also wrong to create art of a woman being tortured to death, when the woman doesn't exist but the art looks so real it appears to be a real execution?

Where do we draw the line and why?

I don't have any problem if anyone hates AI-assisted art because it's a matter of taste. But arguing that something cannot be art based on a misunderstanding or prejudice is another matter.

I'm afraid I have to remind you how you started this thread. You didn't just say that you dislike AI-assisted art - or feel "disgusted" by it, as you put it - but also argued that it's a "fraud" and should be banned from CF.

While I fully respect your personal preference, your argument against AI-assisted art is based on the assumption that the human author doesn't have much agency in the creation process, which is factually wrong.

The reason why I posted some of my renders was to refute this point and show how it's possible to control AI to express one's own vision instead of something a machine randomly generates. I hope it has cleared up any misconception about the matter by now.

Regarding your concern about the possibility of abuse, I try to avoid depicting real people in my kink renders because I'm firmly against such a practice.

But the same danger is also present in more traditional media. For example, one can easily make photo manipulation in Photoshop, but I haven't heard anyone arguing that images processed with that software must be banned from CF for that reason.

And it's possible to achieve photorealism with traditional tools like Blender, and even Daz3D has been improving the quality of its renderer over the years. And I doubt if people would insist on banning Daz3D renders from CF for being "unethical" once it reaches the level of Blender in the near future.
